Welcome To Our Centre

Ghana-India Kofi Annan Centre of Excellence in ICT (GI-KACE) is Ghana’s first Advanced Information Technology Institute with a world-class research facility focusing on innovating products and services for individual and institutional advancement.

As an agency under the Ministry of Communication and Digitalisation, our core mandate is to provide human and institutional capacity development and consultancy, to undertake e-Government research in ICT, Electronics, Artificial Intelligence, Robotics and associated areas, and to develop and apply research and innovative technologies for the socio-economic development of Ghana.

Latest Posts

Silent Culture Destroys An Organization – Jessica Okwabi

Jessica Okwabi, Retail Director, textiles Ghana Ltd, has urged leaders of various organizations…

Fostering Inclusive Leadership And Work-Life Support To Empower Women

In a panel discussion on the question: “What are some of the best…

Communication Can Unlock Inclusion By Bridging The Digital Divide – Sophia Kudjorji

The Chief Corporate Communications Officer of Jospong Group of Companies, Sophia Kudjordi, has…

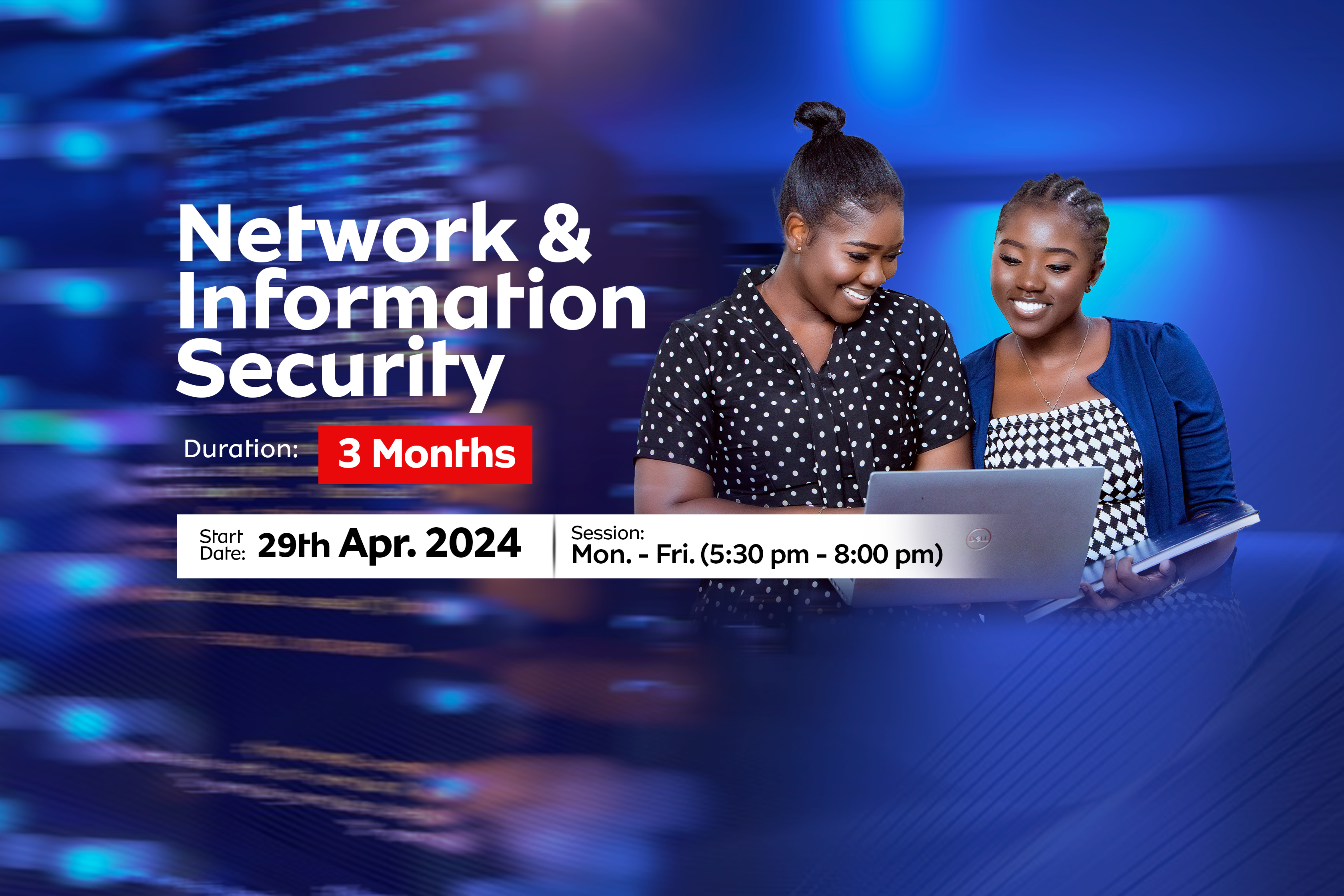

What Our Students Say

Felix Afeti

Founder and CEO of Liwel Consulting

The knowledge gained at AITI-KACE made it possible for me to become the Founder and CEO of Liwel Consulting. Rigorous studies in Data Communication, Software Engineering and IT Security, and deeper work in programming languages, Operating Systems and Databases, among others, have equipped me to think ahead of my customers and provide solutions that make them competitive.

Amatu Salaam-Aidoo

Engineer at Ecobank E-process

Studying at AITI-KACE prepared me to start a tech company in 2014 using Android and Java Enterprise. Though I did not have a Computer Science background, I gained enough knowledge in programming, operating systems and databases to feel confident taking up any role in the field. I have remained passionate about the tech world, have worked with technology companies, and am currently an Engineer at Ecobank E-process.

Prince Boateng Asare

User Experience Specialist

I enrolled at AITI-KACE immediately after graduating from SHS, and it was one of the best decisions I've ever made. Because all the training was hands-on and practical, it helped me gain technical and employability skills that made me stand out from the crowd. As a result, I got my first job six months after enrolling at AITI-KACE, even before going to university to pursue my degree. Today, I work and consult for a variety of organizations, including AITI-KACE.

Joshua Opoku Agyemang

President, IoT Network Hub Ghana

After SHS, I took the risk of following my passion by enrolling at AITI-KACE instead of a tertiary institution, and I must confess I have never regretted that decision. I am currently a co-founder and President of IoT Network Hub - Africa, an impact-based organisation with over 20,000 members across 20 African countries, on a mission to explore emerging technologies to solve Africa's problems. The programmes taught at AITI-KACE helped me solve complex problems, gave me critical thinking skills, exposed me to industry professionals and mentors, and launched me into an exciting tech entrepreneurial career. In addition, I serve as President of Ghana STEM Network, on a mission to accelerate Ghana's transition to a more practical and sustainable education system.